The Big Tech cartel’s social media keystones, Twitter and Facebook, rose to prominence as the mainstream avenues through which citizens, governments, and businesses alike communicate with each other, broadcast warnings and instructions, and advertise. Today, as these platforms have become rife with both censorship and a dense, one-voice, left-wing establishment narrative, what if the accounts you see, the comments they post, and even the profile pictures they use are actually just generated by artificial intelligence?

That is exactly the case when it comes to pro-Chinese Communist Party accounts on Twitter and Facebook, according to an Aug. 4 report by the Centre for Information Resilience (CIR) titled Analysis of the Pro-China Propaganda Network Targeting International Narratives.

The 80-page report, composed mostly of pictorial evidence and real-world examples, should be considered a must-read for any social media user, especially those concerned about the veracity and integrity of the messages they read and the responses they receive on the platforms.

The CIR says they have uncovered a “Coordinated attempt, using a mix of fake, real and stolen social media accounts, to distort international perceptions on significant issues, elevate China’s reputation amongst its supporters, and discredit claims critical of the Chinese Government.”

They found that the AI bot campaign operated in both English and Chinese and sought to influence public opinion by posing as organic, real users on issues such as gun control, racial justice, COVID-19, and the Xinjiang genocide.

The CCP’s Twitter botnet was found to use accounts that posed as people by utilizing machine-learning generated profile pictures through StyleGAN. CIR explained the technology in the following way: “StyleGAN, short for Style Generative Adversarial Networks, is a type of machine learning framework, where a technique trained on a dataset is able to generate new data with the same statistics as the dataset. For StyleGAN networks, the technique enables brand new faces to be created that don’t exist in real life.”

Some of the biggest tells that the botnet was using synthetic profile pictures were subtle blurring of facial features and flaws where heads met backgrounds, in teeth alignment, or where glasses met faces. But the biggest red flag was that the eyes in every photo sat on the same horizontal axis, which researchers showed to be consistent across dozens of accounts.
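The eye-alignment tell lends itself to a simple check. The sketch below is only an illustration, not CIR's method: it assumes eye coordinates have already been extracted from each profile picture by some face-landmark tool (not shown) and normalized to the 0 to 1 range, and the function name `eyes_aligned`, the `tolerance` value, and the sample coordinates are all invented for the example.

```python
from statistics import pstdev

def eyes_aligned(eye_positions, tolerance=0.01):
    """Return True if eye heights barely vary across a set of profile pictures.

    eye_positions: list of ((left_x, left_y), (right_x, right_y)) tuples,
    one per picture, in normalized image coordinates (0..1).
    tolerance: hypothetical cutoff on the spread of eye heights.
    """
    if len(eye_positions) < 2:
        return False  # nothing to compare against
    left_ys = [left[1] for left, _ in eye_positions]
    right_ys = [right[1] for _, right in eye_positions]
    # A tiny spread in vertical position across many unrelated "people"
    # is the pattern described above.
    return pstdev(left_ys) <= tolerance and pstdev(right_ys) <= tolerance

# Made-up coordinates for illustration only.
synthetic_like = [
    ((0.380, 0.470), (0.620, 0.470)),
    ((0.381, 0.472), (0.619, 0.469)),
    ((0.379, 0.468), (0.621, 0.471)),
]
organic_like = [
    ((0.30, 0.40), (0.55, 0.42)),
    ((0.45, 0.55), (0.70, 0.52)),
    ((0.35, 0.30), (0.60, 0.33)),
]

print(eyes_aligned(synthetic_like))
print(eyes_aligned(organic_like))
```

A check like this would only be one signal among several, since cropped or professionally shot portraits of real people can also happen to line up.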

They noted that the botnet also utilized accounts with “more authentic appearing images,” including anime images and accounts that were clearly once owned by a person but had been “repurposed” to serve as a propaganda tool, likely through either a purchased account or a compromised password.

CIR noted their investigation found that CCP botnets were still using similar characteristics to those found in reports between 2019 and 2021 by Graphika, Australian Strategic Policy Institute, Stanford, and others, such as utilizing text-heavy 4chan-style images, a pro-China and anti-West sentiment, and patterns arising from both repurposed accounts and a division of labor between bots for posting and sharing.

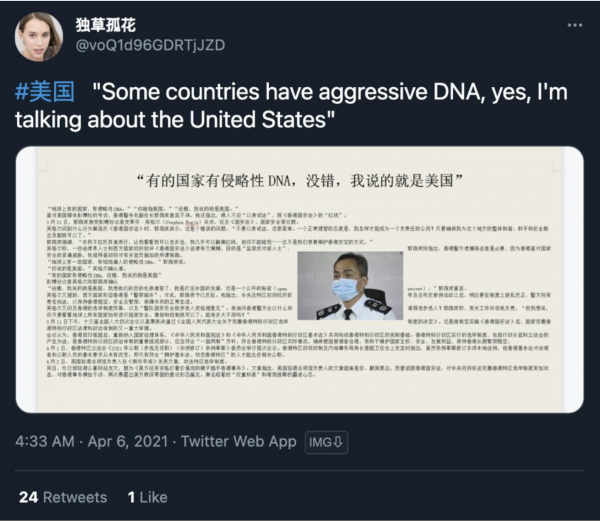

The team pulled data from Twitter’s API based on three hashtags to gather intelligence for its analysis over the course of a month:

- #香港 (Hong Kong);

- #美国 (United States); and

- #郭文贵 (Guo Wengui).

The team then cleaned and processed the data before using the Gephi data visualization platform to compile their findings. To discern between accounts likely to be bots and accounts likely to be real humans, CIR analyzed factors such as whether an account had a unique username, account metrics such as follower and following counts, the age of the account, and its retweet ratio, among others.

For example, several dozen bots comprising one network often took instructions from an account with the handle @corrinehartma10 and carried almost exclusively alphanumeric names such as @hollyca07911706, @ellafit29332445, and @tonyabe15414870.
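The account-level signals described above (handle shape, follower counts, account age, retweet ratio) can be combined into a rough checklist. The following is a minimal sketch under stated assumptions, not CIR's actual scoring: the `Account` fields, the regular expression (letters followed by exactly eight digits, matching the three handles quoted above), the 90-day and 90 percent cutoffs, and all metric values are hypothetical.

```python
import re
from dataclasses import dataclass

# Assumption: letters followed by exactly eight digits, as in the quoted handles.
AUTO_HANDLE = re.compile(r"^@?[A-Za-z]+\d{8}$")

@dataclass
class Account:
    handle: str
    followers: int
    following: int
    age_days: int
    tweets: int
    retweets: int  # how many of `tweets` are retweets

def bot_signals(acct: Account) -> list[str]:
    """List which hypothetical warning signs an account trips."""
    signals = []
    if AUTO_HANDLE.match(acct.handle):
        signals.append("auto_handle")
    if acct.followers == 0:
        signals.append("zero_followers")
    if acct.age_days < 90:  # hypothetical cutoff
        signals.append("new_account")
    if acct.tweets > 0 and acct.retweets / acct.tweets >= 0.9:  # hypothetical cutoff
        signals.append("mostly_retweets")
    return signals

# The handle comes from the article; every metric below is invented.
suspect = Account("@hollyca07911706", followers=0, following=3,
                  age_days=30, tweets=50, retweets=49)
# Entirely hypothetical account for contrast.
regular = Account("@example_user", followers=250, following=180,
                  age_days=2000, tweets=400, retweets=60)

print(bot_signals(suspect))
print(bot_signals(regular))
```

No single signal is conclusive on its own, which is presumably why the researchers weighed several factors together rather than relying on usernames alone.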

The team found an almost identical pattern of text-based image posting, hashtag usage, account nomenclature, profile photo type, and propaganda narrative-based responses on both Facebook and the YouTube comment section.

A heavily utilized approach employed by the botnet’s makers was to have a tweet from an account devoted to posting retweeted by an array of zero-follower accounts with similar archetypal names, photos, and history. The aim was to ensure the propaganda gained traction in Twitter’s algorithm and had an opportunity to reach the eyes of real human users or go viral.
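One way to surface this amplification pattern in collected data is to measure, per tweet, what share of its retweets came from zero-follower accounts. The sketch below assumes retweet records are available as simple `(tweet_id, retweeter_followers)` pairs; that input shape, the function name `zero_follower_share`, and the sample records are assumptions made for illustration and are not taken from the report.

```python
from collections import defaultdict

def zero_follower_share(retweets):
    """Map each tweet ID to the fraction of its retweets from zero-follower accounts.

    retweets: iterable of (tweet_id, retweeter_followers) pairs.
    """
    totals = defaultdict(int)
    zeros = defaultdict(int)
    for tweet_id, followers in retweets:
        totals[tweet_id] += 1
        if followers == 0:
            zeros[tweet_id] += 1
    return {tweet_id: zeros[tweet_id] / totals[tweet_id] for tweet_id in totals}

# Invented records: "t1" is boosted mostly by zero-follower accounts.
sample = [
    ("t1", 0), ("t1", 0), ("t1", 0), ("t1", 12),
    ("t2", 340), ("t2", 0), ("t2", 95), ("t2", 1200),
]

shares = zero_follower_share(sample)
print(shares)
```

Tweets with an unusually high share would then be candidates for a closer manual look at the accounts doing the retweeting.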

On the topics of US gun violence and the Xinjiang genocide of Uyghur Muslims, the investigation found that the botnet followed the lead of accounts belonging to CCP state-run media employees and official Party spokespeople.

On the Xinjiang genocide, the report gives the specific example of bots posting propaganda content that aims to deny and delegitimize the persecution of Uyghur Muslims, similar to content retweeted by Ministry of Foreign Affairs spokesperson Zhao Lijian and by Hu Xijin, editor-in-chief of the Communist Party media outlet Global Times.

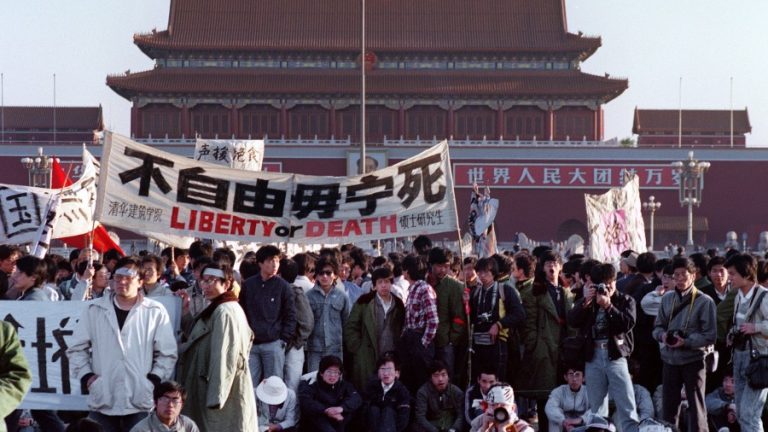

The pattern repeated across other topics, such as Black Lives Matter and racial justice, India’s recent COVID-19 crisis, the Wuhan lab leak theory, and the Hong Kong democracy movement.