Researchers now say that the popular artificial intelligence (AI) platform GPT is indeed performing worse on tasks than it did when it was first released last November, despite assurances from its developer, OpenAI.

Researchers from Stanford and Berkeley have found that over a period of just a few months, both GPT-3.5 and GPT-4 — the systems that power ChatGPT — have been producing responses with a plummeting rate of accuracy.

The findings, detailed in a paper that has yet to be peer-reviewed, affirm what many of the AI’s users have suspected for some time.

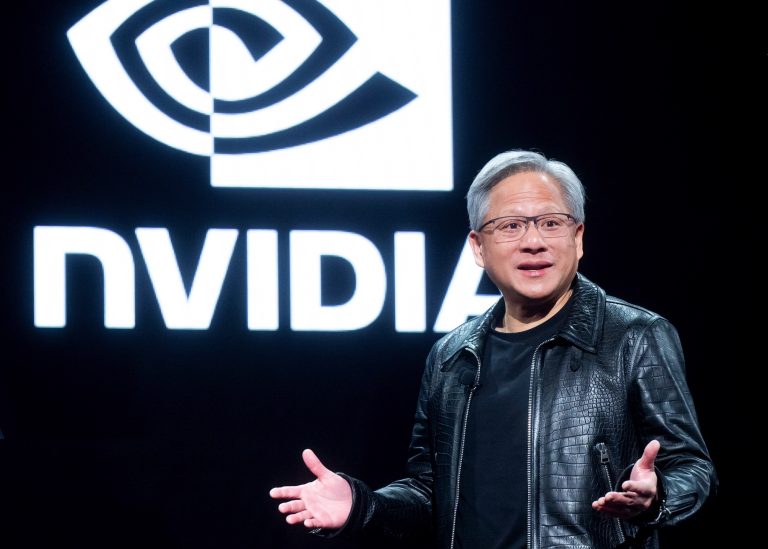

The observed decline in the AI’s performance has prompted OpenAI’s vice president of product, Peter Welinder, to attempt to dispel the rumors.

“No, we haven’t made GPT-4 dumber. Quite the opposite: we make each new version smarter than the previous one,” he tweeted on July 13, adding, “Current hypothesis: When you use it more heavily, you start noticing issues you didn’t see before.”

Welinder then told users, “If you have examples where you believe it’s regressed, please reply to this thread and we’ll investigate.” He also told users that the free version, GPT-3.5, has improved as well.

‘Substantially worse’

The Stanford and Berkeley researchers, however, believe the AI is performing worse than it did before on certain tasks.

“We find that the performance and behavior of both GPT-3.5 and GPT-4 vary significantly across these two releases and that their performance on some tasks have gotten substantially worse over time,” the researchers wrote, adding that they question the claim that GPT-4 is getting stronger.

“It’s important to know whether updates to the model aimed at improving some aspects actually hurt its capability in other dimensions,” the researchers wrote.

The researchers, including Lingjiao Chen, a computer-science Ph.D. student, and James Zou, one of the authors of the research, gave the AI a relatively simple task: identify prime numbers.

They found that in March this year the AI correctly identified prime numbers 84 percent of the time. When given the same task in June, its accuracy fell to just 51 percent.
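To make the measurement concrete, the following is a minimal Python sketch of how accuracy on a yes/no prime-number test could be scored and compared across two model snapshots. It is not the researchers' code: the function names and the sample replies are hypothetical and invented purely for illustration.

```python
def is_prime(n: int) -> bool:
    """Ground truth, computed by simple trial division."""
    if n < 2:
        return False
    if n < 4:
        return True  # 2 and 3 are prime
    if n % 2 == 0:
        return False
    d = 3
    while d * d <= n:
        if n % d == 0:
            return False
        d += 2
    return True


def accuracy(answers: dict[int, str]) -> float:
    """Fraction of yes/no replies that agree with the ground truth."""
    correct = sum(
        (reply.strip().lower() == "yes") == is_prime(n)
        for n, reply in answers.items()
    )
    return correct / len(answers)


# Hypothetical replies to "Is N a prime number?" from two snapshots.
# These values are made up for illustration and are not study data.
march_replies = {7: "Yes", 9: "No", 11: "Yes", 15: "No"}
june_replies = {7: "No", 9: "No", 11: "Yes", 15: "Yes"}

march_acc = accuracy(march_replies)
june_acc = accuracy(june_replies)
print(f"March: {march_acc:.0%}, June: {june_acc:.0%}, change: {june_acc - march_acc:+.0%}")
```

Scoring both snapshots against the same questions and the same ground truth is what allows a drop such as the one reported to be attributed to the model rather than to the test.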

The researchers gave the AI a total of eight different tasks and found that GPT-4 became worse at six of them, whereas GPT-3.5 improved on six of them but still did not perform as well as its more advanced sibling, GPT-4, on all tasks.

They also found that in March GPT-4 answered 98 percent of the questions posed to it, but by June it answered only 23 percent of queries, telling users that their prompt was too subjective and that, as an AI, it did not have any opinions.

‘AI drift’

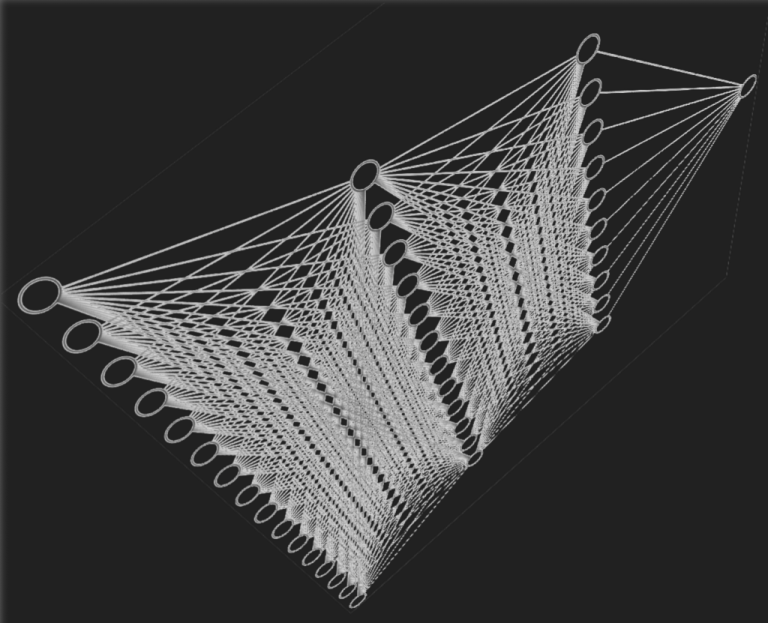

Experts believe that GPT-4 and GPT-3.5, the free version, may be experiencing what researchers refer to as “AI drift.”

Drift occurs when large language models (LLMs) behave in bizarre ways that stray from the original parameters, confounding their developers.

This may be the result of developers making changes that are intended to improve the AI but end up having a detrimental impact on other features.

This could account for the deterioration in performance that researchers have found.

For instance, the researchers found that between March and June, GPT-4 performed worse at code generation, answering medical exam questions, and answering opinion prompts, all of which can be attributed to the AI drift phenomenon.

Zou told the Wall Street Journal, “We had the suspicion it could happen here, but we were very surprised at how fast the drift is happening.”

The findings could provide insight into how other AI platforms perform over extended periods of time. AI drift may be inevitable, according to the researchers, because most LLMs are trained in a similar fashion and are therefore likely to show similar outcomes.

The authors of the study wrote that they intend to update their findings and conduct an “ongoing long-term study by regularly evaluating GPT-3.5, GPT-4 and other LLMs on diverse tasks over time.”