As human rights activists, backed by dozens of countries, call for an outright ban on the use of lethal autonomous weapons, or “killer robots,” ahead of high-level talks at the United Nations (UN) this week, the Biden administration has rejected the idea of a ban and has instead proposed the establishment of a “code of conduct” for their use.

On Dec. 13, UN Secretary-General Antonio Guterres called for new rules addressing the use of autonomous weapons during the opening of the Convention on Certain Conventional Weapons talks, which are being attended by 125 parties this week, including the United States, China and Israel.

“I encourage the Review Conference to agree on an ambitious plan for the future to establish restrictions on the use of certain types of autonomous weapons,” Guterres said at the opening of the talks.

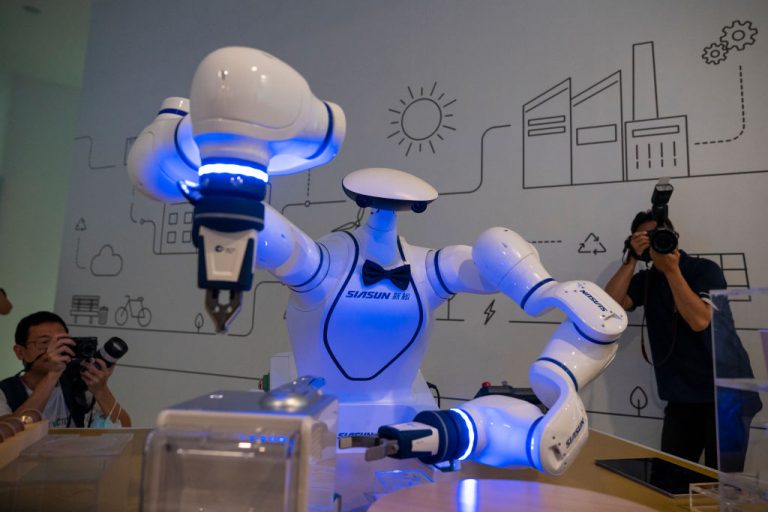

This marks the eighth year the UN has held talks to discuss limits on lethal autonomous weapons (LAWS), which are fully machine-controlled robots that rely on artificial intelligence (AI) and facial recognition and operate with little or no human intervention.

This year’s talks are particularly tense after a report in March indicated the first autonomous drone attack may have already occurred in Libya.

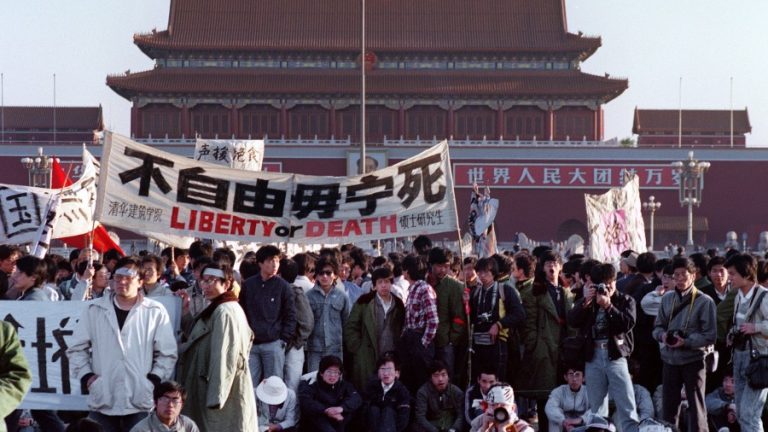

Several parties participating in the talks are calling for a total ban on LAWS, including the government of Austria; Amnesty International, an international human rights advocacy organization; and Stop Killer Robots, an advocacy group that boasts 180+ member organizations.

A petition was presented on Monday calling for countries to start negotiating an international treaty to address the technology and its use.

Clare Conboy of Stop Killer Robots told Reuters, “The pace of technology is really beginning to outpace the rate of diplomatic talks. This is a historic opportunity for states to take steps to safeguard humanity against autonomy in the use of force.”

Stop Killer Robots

According to the organization’s website, Stop Killer Robots believes “technology should be used to empower all people, not to reduce us – to stereotypes, labels, objects, or just patterns of 1’s and 0’s.”

The organization asserts that machines “don’t see us as people” and argues that killer robots don’t simply appear, they are created and thus are ultimately under human control.

Advances in AI and machine learning are removing the human factor from robot actions and now pose a threat to human rights across the globe, the advocacy organization says.

Robotics technology has been advancing at a blistering pace. Elon Musk famously tweeted in November 2017, “In a few years, that bot will move so fast you’ll need a strobe light to see it,” when commenting on Boston Dynamics’ latest demonstration of its tech.

While Musk’s prediction has yet to come to fruition, robotics developers across the globe post new advances, seemingly weekly, that elicit both awe and terror.

Engineered Arts Ltd, a UK-based robotics and artificial intelligence company that produces entertainment robots, posted a jaw-dropping video this month of its latest humanoid design.

Engineered Arts claims that its new robot, Ameca, “is the world’s most advanced human shaped robot representing the forefront of human-robotics technology,” and that it is “designed specifically as a platform for development into future robotics technologies.”

While advances of this kind stun crowds, it is the underlying technology they demonstrate that could be exploited by militaries or bad actors.

Amnesty International tweeted on Dec. 14, “Once thought to be just in the movies, killer robots are no longer a problem for the future. Drones, & other advanced weapons are being developed with the ability to choose and attack their own targets – without human control.”

Militaries are investing heavily in autonomous weapons

Militaries around the globe have been investing billions in the development of autonomous robots. The U.S. alone invested US$18 billion in autonomous weapons between 2016 and 2020.

Perhaps the most alarming emerging problem is not the high-end autonomous machines capable of violence but the low-end technologies that are easily accessible and simple to operate. These open the door for bad actors with limited resources to unleash arbitrary violence on unsuspecting civilian populations.

Killer robots that are cheap, effective and almost impossible to contain will almost certainly be used by people outside of government control, including international and domestic terrorists, to advance their agendas.

The Kargu-2, manufactured and distributed by Turkish defense contractor STM, is a cheap quadcopter that operates as both a drone and a bomb. Easy to manufacture, the drone uses AI to find and track targets and is suspected of having been used to attack people during the Libyan civil war.

In March 2020, a Kargu-2 reportedly attacked a person autonomously during a conflict between Libyan government forces and a breakaway military faction led by the Libyan National Army’s Khalifa Haftar, the Daily Star reported.

According to a report by New Scientist, “The lethal autonomous weapons systems were programmed to attack targets without requiring data connectivity between the operator and the munition: in effect, a true ‘fire, forget and find’ capability.”

Diplomats at this week’s talks think it is unlikely that any agreement on the use of killer robots will be reached, and expect that countries may be forced to move talks to another forum, either inside or outside the United Nations.